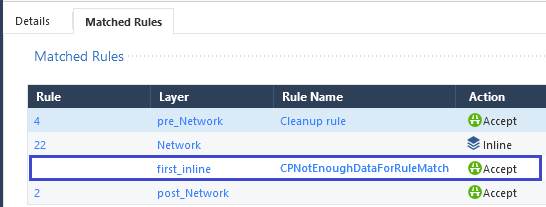

Scaling up TCP servers is usually straightforward. Most deployments start with a single-process setup, and more worker processes are added when the need arises. This is the scalability model for many applications, including HTTP servers like Apache, NGINX or Lighttpd.

Increasing the number of worker processes is a great way to overcome a single CPU core bottleneck, but it opens a whole new set of problems.

There are generally three ways of designing a TCP server with regard to performance:

(a) Single listen socket, single worker process.

(b) Single listen socket, multiple worker processes.

(c) Multiple worker processes, each with a separate listen socket.

(a) Single listen socket, single worker process

This is the simplest model, where processing is limited to a single CPU. A single worker process both calls accept() to receive new connections and processes the requests themselves. This model is the preferred Lighttpd setup.

(b) Single listen socket, multiple worker processes

The new connections sit in a single kernel data structure (the listen socket). Multiple worker processes both call accept() and process the requests. This model enables some spreading of the inbound connections across multiple CPUs.

(c) Separate listen socket for each worker process

By using the SO_REUSEPORT socket option it's possible to create a dedicated kernel data structure (the listen socket) for each worker process. This can avoid listen socket contention, which isn't really an issue unless you run at Google scale. It can also help in better balancing the load. More on that later.

At Cloudflare we run NGINX, and we are most familiar with the (b) model. In this blog post we'll describe a specific problem with this model, but let's start from the beginning.

Not many people realize that there are two different ways of spreading the accept() new connection load across multiple processes.

Let's call the first one blocking-accept. The idea is to share an accept queue across processes, by calling blocking accept() from multiple workers concurrently.

The second model should be called epoll-and-accept. The listen socket is created with `sd = bind(('127.0.0.1', 1024))` and each worker registers it with `ed.register(sd, EPOLLIN | EPOLLEXCLUSIVE)`. The intention is to have a dedicated epoll in each worker process. The worker will call non-blocking accept() only when epoll reports new connections. We can avoid the usual thundering-herd issue by using the EPOLLEXCLUSIVE flag.

While these programs look similar, their behavior differs subtly. Let's see what happens when we establish a couple of connections to each:

    $ for i in `seq 6`; do nc localhost 1024; done